Key Points:

- Security and privacy concerns: Increased use of AI systems raises issues like data manipulation, model vulnerabilities, and information leaks.

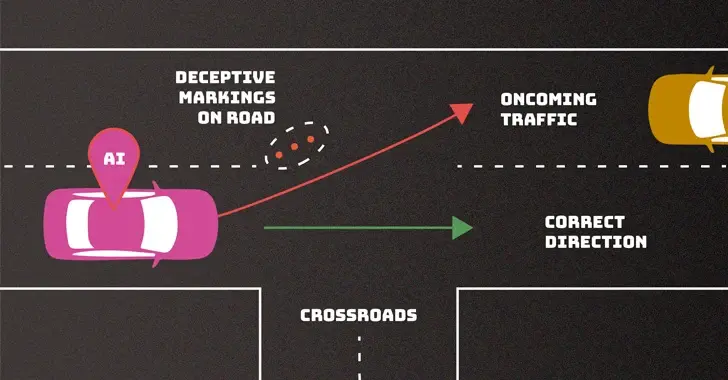

- Threats at various stages: Training data, software, and deployment are all vulnerable to attacks like poisoning, data breaches, and prompt injection.

- Attacks with broad impact: Availability, integrity, and privacy can all be compromised by evasion, poisoning, privacy, and abuse attacks.

- Attacker knowledge varies: Threats can be carried out by actors with full, partial, or minimal knowledge of the AI system.

- Mitigation challenges: Robust defenses are currently lacking, and the tech community needs to prioritize their development.

- Global concern: NIST’s warning echoes recent international guidelines emphasizing secure AI development.
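To make the poisoning threat a bit more concrete, here is a minimal sketch (not taken from the NIST report) of how a few mislabeled training samples can flip a model's decision. Everything in it is an assumption for illustration: the toy 1-D nearest-centroid classifier, the helper names `train_centroids` and `predict`, the labels, and the sample values.

```python
from statistics import mean

def train_centroids(samples):
    """Hypothetical helper: average the feature values per label.

    samples is a list of (feature, label) pairs; returns {label: centroid}.
    """
    by_label = {}
    for x, y in samples:
        by_label.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_label.items()}

def predict(centroids, x):
    """Return the label whose centroid is closest to x."""
    return min(centroids, key=lambda y: abs(centroids[y] - x))

# Clean training data: low values are benign, high values are malicious.
clean = [(1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
         (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious")]

# Poisoning: the attacker slips in high-valued samples labeled "benign",
# dragging the benign centroid toward the malicious region.
poison = [(9.0, "benign"), (10.0, "benign"), (11.0, "benign")]

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

query = 6.5
print("clean model:   ", predict(clean_model, query))
print("poisoned model:", predict(poisoned_model, query))
```

With the clean data the query lands closer to the malicious centroid; after the three poisoned samples are added, the benign centroid shifts far enough that the same query is classified as benign. Real attacks and real models are far more complex, but the mechanism is the same: whoever can influence the training data can influence the decisions.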

Overall:

NIST identifies serious security and privacy risks associated with the rapid deployment of AI systems, urging the tech industry to develop better defenses and implement secure development practices.

Comment:

From the look of things, it’s going to get worse before it gets better.

That’s a fair point, but if AI is not better than, or at least equivalent to, a competent human driver, why are we even allowing it?

“Bad drivers” have rights … AI doesn’t, and it creates potential risks to others.

We aren’t allowing it.

No doubt, AI used for Level 5 autonomy should be trained to detect these situations and make the correct decision; otherwise it wouldn’t be a Level 5 system. This is one of the many reasons why self-driving is not a solved problem yet. The systems we use today are either used strictly as driving aids under close human supervision or deployed in small areas where the AI has already been evaluated to perform well.