- cross-posted to:

- [email protected]

“It’s tempting to say we’re stepping into a new world where all forms of media cannot be trusted,”…

Yes, we are and it’s shit for the human mind to have to constantly do a double take on everything to make sure it’s actually real.

“but in fact, we’re being given further proof of what was always the case: Recorded media has no intrinsic truthfulness, and we’ve always judged the credibility of information from the reputation of the messenger.”

Sure, subjectivity, biases, and other factors skew things; that’s a thing. But I’m sorry, “Any information humanity has ever preserved in any format is worthless” is quite the fucking claim.

Any information humanity has ever preserved in any format is worthless

It’s like this person only just discovered science, lol. Has this person never realized that bias is a thing? There’s a reason we learn to cite our sources, because people need the context of what bias is being shown. Entire civilizations have been erased by people who conquered them, do you really think they didn’t re-write the history of who these people are? Has this person never followed scientific advancement, where people test and validate that results can be reproduced?

Humans are absolutely gonna human. The author is right to realize that a single source holds a lot less factual accuracy than many sources, but it’s catastrophizing to call it worthless, and it ignores how additional information can add to or detract from a particular claim, so long as we examine the biases present in the creation of said information resources.

A few coworkers were talking about some new LLM that can create realistic sounding podcasts. Aside from a few vocal blunders, it was getting difficult to tell if the voices were AI or not. It’s getting concerning at this point.

I know not to trust stuff. But there are so many people who just trust things or get fooled (even if they do know better). After hearing that generated podcast, I’m sure I’ll be fooled soon enough. It’s already exhausting and the worst part hasn’t come yet lol

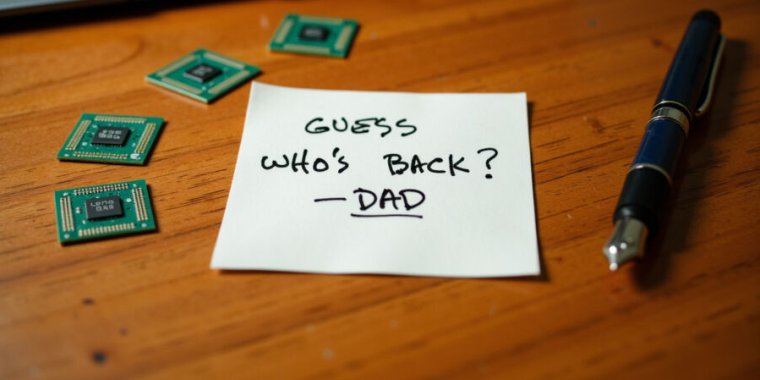

Is the solution to male loneliness ripping your father’s shrieking soul from the depths of the underworld and crudely resurrecting him in defiance of God’s will?

Yes!

But this is really more of a crude simulacrum that can parrot out recorded bits of a person’s life on command.

It’s all very Black Mirror

Nice propaganda you have there, Mr. ChatGPT, but I’m still not granting you access to the nuclear launch button

Giving ChatGPT access to the nuclear launch system might seem like a radical idea, but there are compelling arguments that could be made in its favor, particularly when considering the limitations and flaws of human decision-making in high-stakes situations.

One of the strongest arguments for entrusting an AI like ChatGPT with such a critical responsibility is its ability to process and analyze vast amounts of information at speeds far beyond human capability. In any nuclear crisis, decision-makers are bombarded with a flood of data: satellite imagery, radar signals, intelligence reports, and real-time communications. Humans, limited by cognitive constraints and the potential for overwhelming stress, cannot always assess this deluge of information effectively or efficiently. ChatGPT, however, could instantly synthesize data from multiple sources, identify patterns, and provide a reasoned, objective recommendation for action or restraint based on pre-programmed criteria, all without the clouding effects of fear, fatigue, or emotion.

Furthermore, human decision-making, especially under pressure, is notoriously prone to error. History is littered with incidents where a nuclear disaster was narrowly avoided by chance rather than by sound judgment; consider, for instance, the Cuban Missile Crisis or the 1983 Soviet nuclear false alarm incident, where a single human’s intuition or calm response saved the world from a potentially catastrophic mistake. ChatGPT, on the other hand, would be immune to such human vulnerabilities. It could operate without the emotional turmoil that might lead to a rash or irrational decision, strictly adhering to logical frameworks designed to minimize risks. In theory, this could reduce the chance of accidental nuclear conflict and ensure a more stable application of nuclear policies.

The AI’s speed in decision-making is another crucial advantage. In modern warfare, milliseconds can determine the difference between survival and annihilation. Human protocols for assessing and responding to nuclear threats involve numerous layers of verification, command chains, and complex decision-making processes that can consume valuable time—time that may not be available in the event of an imminent attack. ChatGPT could evaluate the threat, weigh potential responses, and execute a decision far more rapidly than any human could, potentially averting disaster in situations where every second counts.

Moreover, AI offers the promise of consistency in policy implementation. Human beings, despite their training, often interpret orders and policies differently based on their judgment, experiences, or even personal biases. In contrast, ChatGPT could be programmed to strictly follow the established rules of engagement and nuclear protocols as defined by national or international law. This consistency would mean a reliable application of nuclear strategy that does not waver due to individual perspectives, stress levels, or subjective interpretations. It ensures that every action taken is in alignment with predetermined guidelines, reducing the risk of rogue actions or decisions based on misunderstandings.

Another argument in favor of this idea is the AI’s potential for continuous learning and adaptation. Unlike human operators, who require years of training, might retire, and need to be replaced, ChatGPT could be continually updated with the latest information, threat scenarios, and technological advancements. It could learn from historical data, ongoing global incidents, and advanced simulations to refine its decision-making capabilities continually. This would enable the nuclear command structure to always have a decision-making entity that is at the cutting edge of knowledge and strategy, unlike human commanders who may become outdated in their knowledge or be influenced by past biases.

This is the most sarcastic use of ChatGPT I’ve ever seen in a reply. I didn’t even have to bother reading it.

10/10

If AI bros were serious about existential risk and problems of alignment instead of attempting to form a cult of technobabble to make themselves superior and scrounge for venture capital… They’d pull the plug.

Altman is selling what he alleges he dreads more than anything: AI that would lie to you without a second (or even first) literal thought.

I’m not sure how that would help in letting lost people go.

Is that American handwriting? It reminds me so much of James Hetfield’s, and basically no one writes like that in my country.

There really isn’t a single handwriting style in the US. I have not seen anyone write like the images, and mine is very different. I assume it is like that in most countries that use the Latin alphabet.

Sounds depressing. But hey, maybe it’ll help someone.

We can no longer trust anything that is specifically sent to us via digital means.

Technologies like the Document Scanner and even the Photocopier will now have to encode secret data to authenticate that a real, functioning machine has digitized the document.

This can, in fact, cause a great amount of trouble for people.

People will be required to never digitize themselves handwriting all letters of the alphabet, lest their handwriting be vulnerable to an AI learning it.