I used to be the Security Team Lead for Web Applications at one of the largest government data centers in the world, but now I mostly do “source available” security, mainly focusing on BSD. I’m on GitHub, but I run a self-hosted Gogs (which Gitea came from) git repo at Quadhelion Engineering Dev.

Well, on that server I tried to deny AI with Suricata, robots.txt, “NO AI” licenses, Human Intelligence (HI) License links in the software, and “NO AI” comments in posts everywhere on the Internet where my software was posted. Here is what I found today after correlating all my logs of git clones or scrapes and tracing them all back to IP/Company/Server.
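The post does not show the logs or the tooling behind that correlation, so the following is only a minimal sketch of one way to start. It assumes the git server sits behind a web server writing the common "combined" access log format, and it treats any request whose path contains `git-upload-pack` as clone or fetch traffic (that is the service name git's smart HTTP protocol uses). The function names `is_git_clone` and `count_clones` are made up for illustration.

```python
import re
from collections import Counter

# Combined log format is an assumption; the post does not show its logs.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def is_git_clone(path):
    """True for the smart-HTTP requests that a git clone or fetch makes."""
    return "git-upload-pack" in path


def count_clones(lines):
    """Count clone/fetch requests per (ip, user agent) pair."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and is_git_clone(match["path"]):
            counts[(match["ip"], match["agent"])] += 1
    return counts
```

Sorting the result with `count_clones(lines).most_common()` gives a short list of addresses worth looking up by hand. Note that the user agent is self-reported, so it is a hint rather than proof of who is cloning.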

Having formerly been loath to even give my thinking pattern to a potential enemy, I asked Perplexity AI questions specifically about BSD security, a very niche topic. Although there is a huge data pool here in general over many decades, my type of software is pretty unique; is buried, as it does not come up in the first two pages of a GitHub search for BSD Security, which is all most users will click through; is very recent compared to the “dead pool” of old knowledge; and is fairly well received, yet not generally popular, so GitHub Traffic Analysis is very useful.

The traceback and AI result analysis shows the following:

- GitHub cloning vs visitor activity in the Traffic tab DOES NOT MATCH any useful pattern for me, the Engineer. Likelihood of AI training, as a rough estimate for my own repositories: 60% of clones are AI/Automata

- GitHub README.md is not licensable material and is a public document able to be trained on no matter what the software license, copyright, statements, or any technical measures used to dissuade/defeat it. a. I’m trying to see whether tracking down if any README.md, no matter what the context, is trainable is a solvable engineering project considering my life constraints.

- Plagiarism of technical writing: Probable

- Theft of programming “snippets” or perhaps “single lines of code” and overall logic design pattern for that solution: Probable

- Supremely interesting choice of datasets used vs available, in summary use, but also checking for validation against other software and weighted upon reputation factors with “Coq” like proofing, GitHub “Stars”, Employer History?

- Even though I can see my own writing and formatting right out of my README.md, the citation was to “Phoronix Forum”, but that isn’t true. That’s like saying your post is “Tick Tock” said. I wrote that; a real flesh and blood human being took comparatively massive amounts of time to do that. My birth name is there in the post 2 times [EDIT: post signature with my name no longer? Name not in “about” either hmm], in the repo, in the comments, all over the Internet.

[EDIT continued] Did it choose the Phoronix vector to that information because it was less attributable? It found my other repos in other ways. My Phoronix handle is the same name as my GitHub username, where my handle is my name, easily inferable in any, as well as a biography link with my full name in the about. [EDIT cont end]

You should test this out for yourself as I’m not going to take days or a week making a great presentation of a technical case. Check your own niche code, a specific code question of application, or make a mock repo with super niche stuff with lots of code in the README.md and then check it against AI every day until you see it.

P.S. I pulled up TabNine and tried to write Ruby so complicated and magically mashed that AI could offer me nothing, just as an AI obfuscation/smartness test. You should try something similar to see what results you get.

Anything you put publicly on the internet in a well known format is likely to end up in a training set. It hasn’t been decided legally yet, but it’s very likely that training a model will fall under fair use. Commercial solutions go a step further and prevent exact 1:1 reproductions, which would likely settle any ambiguity. You can throw anti-AI licenses on it, but until it’s determined to be a violation of copyright, it is literally meaningless.

Also if you just hope to spam tab with any of the AI code generators and get good results, you’re not. That’s not how those work. Saying something like this just shows the world that you have no idea how to use the tool, not the quality of the tool itself. AI is a useful tool, it’s not a magic bullet.

I think that training models for fair use purposes, like education, not commercialization, will also fall under fair use. But even so, it’s very difficult to prove that someone has trained their model on your data without a license, so as long as it’s available, I’m sure that it’ll be used.

And this is why AI needs to be banned from use. People own the things they post / place them under various licenses, and AI coming along and taking what you did is a blatant violation of copyright, ownership, trust, and is just general theft.

I am absolutely angry with the concept of AI and have campaigned against its use and written at length, many times, to every company that believes it’s allowed to scour the internet for training data for its highly flawed, often incorrect, sometimes dangerous AI garbage. To hell with that and to hell with anyone who supports AI.

It hasn’t been decided in court yet, but it’s likely that AI training won’t be considered a copyright violation, especially if there is a measure in place to prevent exact 1:1 reproductions of the training material.

But even then, how are the questionable choices of some LLM trainers a reason to ban all AI? There are some models that are trained exclusively on material that is explicitly licensed for this purpose. There’s nothing legally or morally dubious about training an LLM if the training material is all properly licensed, right?

Sounds like AI or an AI influencer post. The first paragraph is so far off-topic, might as well be talking about sailing. You completely misunderstood what I meant using TabNine. I wrote my own code and obfuscated my own code. Then tried to have AI complete another function using my code.

Nothing you said is relevant in any way, shape, or form.

[EDIT] https://www.tabnine.com/

My guy, your posts are particularly hard to follow, and you are very very quick to jump to the conclusion that you’re somehow being targeted and under attack. It’s no surprise that people aren’t responding to what you think is appropriate for them to respond to.

You’ve gone out of your way to provide extra info about irrelevant details: Why does the particular flavor of git you use matter at all to this conversation beyond the fact that you self host, why does it matter that you are on github as well when we are specifically discussing things you believe were sourced from readme.mds you have self hosted?

Meanwhile you don’t give many details or explanation about the core thing you are trying to discuss, seemingly expecting people to be able to just follow your ramblings.

Edit: After having re-read your OP, it’s less messy than I initially thought, but jesus christ man you need to work on arranging your points better. It shouldn’t take reading your main post, a few of your comments, and the main post again to get your point: “AI data scrapers appear to treat readme files as public data regardless of any anti-AI precautions or licensing you’ve tried to apply, and they appear to not only grab from GitHub but also from self-hosted git repositories.”

Seriously. OP might have a legitimate point but they’re making it with the energy of someone trying to convince me that vole people live in the antiposition of the time cube.

I agree with you that they have consumed far more of the internet than they let on. That scrapers are shoving just everything into these regardless of legality or consent. It’s messed up. Once more, if the world wasn’t just a concrete jungle, this could probably be a great ubiquitous tool in a faster and safer manner than it is now.

Hey Elias, found some confounding info: looks like Perplexity AI doesn’t respect the methods of blocking scrapers through robots.txt so this might just be an issue with them specifically being assholes.

Couldn’t figure out how to tag you in a comment on the other post, so I’ll edit this comment in a moment with the link.
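On the robots.txt point above: robots.txt is advisory, so it only stops a crawler that chooses to look itself up under its real name. A minimal sketch with Python's standard `urllib.robotparser` shows what a well-behaved crawler would conclude. The robots.txt content, the example URL, and the helper name `is_allowed` are made up for illustration, and `PerplexityBot` as the user-agent token is an assumption.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt; the real file on the server is not shown in the thread.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt, user_agent, url):
    """Return what a compliant crawler would decide for this user agent and URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

With this file, `is_allowed(ROBOTS_TXT, "PerplexityBot", "https://example.org/repo/README.md")` is denied, while the same request identifying itself as `"Mozilla/5.0"` is allowed. A scraper that sends a browser-like user agent, or never reads robots.txt at all, is not stopped by this rule.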

Thanks for all the comments affirming my hard working planned 6 month AI honeypot endeavouring to be a threat to anything that even remotely has the possibility of becoming anti-human. It was in my capability and interest to do, so I did it. This phase may pass and we won’t have to worry, but we aren’t there yet, I believe.

I did some more digging in Perplexity on niche security. This is tangential and speculative, unlike my previous evidenced analysis, but I do think I’m on to something and maybe others can help me crack it.

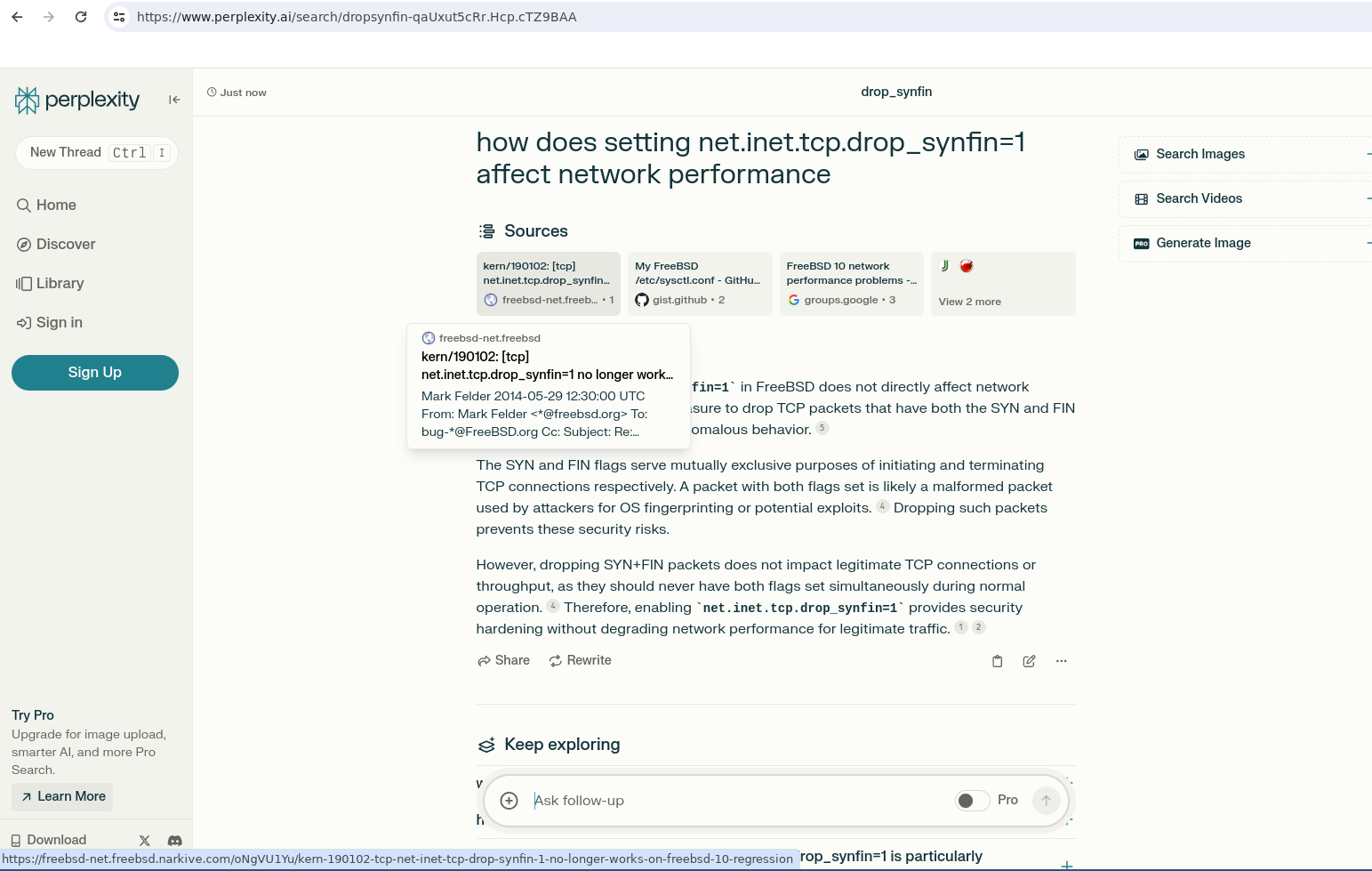

I wrote this nice article https://www.quadhelion.engineering/articles/freebsd-synfin.html about FreeBSD sysctl tunables, dropping SYN FIN and its performance impact on webhosting and security, so I searched for that. There are many conf files out there containing this directive and performance in aggregate, but I couldn’t find any specific data on a controlled test of just that tunable, so I tested it months ago.

Searched for it on Perplexity:

- It gave me a contradictorily worded and badly explained answer with the correct conclusion as from two different people

- None of the sources it claimed said anything* about its performance trade-off

- The answers change daily

- One answer one day gave an identical fork of a gist with the author’s name in comments in the second line. I went on GitHub and notified the original author. https://gist.github.com/clemensg/8828061?permalink_comment_id=5090233#gistcomment-5090233 Then I went back to take a screenshot, I would say maybe 5-10 minutes later, and I could not recreate that gist as a source anymore. I figured it would be consistent so I didn’t need to take a screenshot right then!

The forked gist was: https://gist.github.com/gspu/ac748b77fa3c001ef3791478815f7b6a

[Contradiction over time] The impact was none, negligible, trivial, improve

[Errors] Corrected after yesterday, and in following with my comments on the web that it actually improves performance as in my months old article

- It is not minimal -> trivial, it’s a huge decision that has definite and measurable impact on today’s web stacks. This is an obvious duh moment once you realize you are changing the TCP stack, and that is hardly ever negligible, certainly never none.

drop_synfin is mainly mitigating fingerprinting, not DoS/DDoS (that would be a SYN flood), but I also tested this in my article!
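For readers unfamiliar with the tunable: on FreeBSD the sysctl is `net.inet.tcp.drop_synfin`, and as I understand it, it makes the kernel drop TCP segments that have both SYN and FIN set. No normal connection sends that combination, which is why it shows up mostly in OS fingerprinting probes. The sketch below is not the kernel's code, just an illustration of the flag test on a raw 20-byte TCP header; the function names are made up for this example.

```python
import struct

TCP_FIN = 0x01  # FIN bit in the TCP flags byte
TCP_SYN = 0x02  # SYN bit in the TCP flags byte


def tcp_flags(tcp_header):
    """Return the flags byte (offset 13) of a raw TCP header."""
    if len(tcp_header) < 20:
        raise ValueError("TCP header must be at least 20 bytes")
    return tcp_header[13]


def is_syn_fin(tcp_header):
    """True when both SYN and FIN are set, the case drop_synfin targets."""
    mask = TCP_SYN | TCP_FIN
    return (tcp_flags(tcp_header) & mask) == mask


def build_header(flags):
    """Pack a minimal TCP header with placeholder ports and sequence numbers."""
    return struct.pack("!HHIIBBHHH", 40000, 80, 1, 0, 5 << 4, flags, 65535, 0, 0)
```

Here `is_syn_fin(build_header(TCP_SYN | TCP_FIN))` is true, while a plain SYN (`build_header(TCP_SYN)`), the packet a SYN flood is made of, is not matched. That fits the point above that the tunable is about fingerprinting rather than flood protection.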

Anyone feel like an experiment here in this thread and ask ChatGPT the same question for me/us?

So… if you don’t want the world to see your work, why are you hosting it publicly?

If I copy McDonald’s site one by one for my own restaurant and just change the name, you can expect to be sued.

And yet, their site is available publicly?

It all started with this today:

Perplexity AI Is Lying about Their User Agent https://rknight.me/blog/perplexity-ai-is-lying-about-its-user-agent/

Discussion Primer: From my perspective and potentially millions of others, the readme is part of the software; it is delivered with the software whether zip, tar, or git. Markdown itself is a specification, and the document can be considered software.

In fact README is so integral to the software you cannot run the software without it.

Conclusion: I think we all think of the readme, especially ones with examples of your code in them, as code. I have evidence AI trains on your README even if you tell it specifically not to use the readme, block the readme, block markdown files; it still goes after it. Kinda scary?

I want everyone else to have the evidence I have, Science.

I mean this in the best possible way, but have you ever had any mental health evaluations? I’m not sure if they’re still calling it paranoid schizophrenia, but the way you write makes me concerned.

I write the smartest in the room, passionate, with wisdom and evidence. The way you defame someone like this makes me definitely sure you are not afraid to defame someone’s character with no evidence of anything but your own stupidity and unawareness.

This is out of genuine concern, my dude. Your other comment accusing me of not being a real person is positively alarming.

Your rapacious backwards insult of caring is gross and obvious. You called me “my dude” like a teenager who’s chill, and calm, and correct, but just …a child and wrong in the end. How old are you child? My Lemmy profile is my name with my Seal, naturally born March 4th, 1974 as Elias Christopher Griffin. I’ve done more in my life than most people do in 10. My mental health is top 3% as is my intellect.

You are an unnamed rando lemmy account named “catloaf” who averages 16 posts a day for the past 4 months with no original posts of your own because you aren’t original.

I make only original posts. You seem nothing like a real person. Want to tell us who you are? What makes you special, outside of the mandated counseling you receive or data models you intake?

You know what, no one takes what you say seriously loaf of cat, I certainly didn’t, don’t, and won’t. Here is space for your next hairball

I take back the benefit of the doubt I gave in my earlier reply. This reply is as unhinged as the Navy SEAL copypasta. You need mental health support.

This really reads like copy pasta, if someone told me you were an LLM configured to make antiAI people look bad I’d believe them

I think your problem is here:

You should test this out for yourself as I’m not going to take days or a week making a great presentation of a technical case.

You’ve written a whole lot to try to be convincing but ultimately stopped short of actually proving what you’ve alleged. It looks to me you are frustrated that no one is taking you at your word and going down this rabbit hole themselves, when the various reputational elements you’re relying on are going to be important only to a minority of users. Burden of proof works how it always has, however.

I also just realized why I’m getting heat here, lawsuits.

I just gave legal cause that the practice was not properly disclosed by Microsoft and abused by OpenAI, and legal grounds for a README.markdown containing code being software, not speech, integral to licensed software, which is covered by said license.

If an entity does find out like me your technical writing or code is in AI from a README, they are perhaps liable?

The comments so far aren’t real people posting how they really feel. An agenda or automata. Does that tell you I’m over the target or what?

Look, my post is doing really well on the cybersecurity exchanges. So to all real developers and program managers out there:

Recommend the removal of any “primary logic” functional code examples out of your README.md, that’s it.

PSA, Here to help, Elias

Lmao you got some criticism and now you’re saying everyone else is a bot or has an agenda. I am a software engineer and my organization does not gain any specific benefits for promoting AI in any way. They don’t sell AI products and never will. We do publish open source work however, and per its license anyone is free to use it for any purpose, AI training included. It’s actually great that our work is in training sets, because it means our users can ask tools like ChatGPT questions and it can usually generate accurate code, at least for the simple cases. Saves us time answering those questions ourselves.

I think that the anti-AI hysteria is stupid virtue signaling for luddites. LLMs are here, whether or not they train on your random project isn’t going to affect them in any meaningful way, there are more than enough fully open source works to train on. Better to have your work included so that the LLM can recommend it to people or answer questions about it.

The way that I see it, LLMs are a powerful tool to quickly and easily generate an output that should then be checked by a human. The problem is that it’s being shoehorned into every product it feasibly can be, often as an unchecked source of truth, by people who don’t understand it and just don’t want to miss out. If at any point you have to simply trust an LLM is “right”, it’s being used wrong.

Yeah this is super sensible. Out of curiosity, do you have any decent examples of bad usage? I think chatbots and GitHub Copilot type stuff are fine. I find the rewording applications to be fine. I haven’t used it, but Duolingo has an AI mode now and it is questionable sounding, but maybe it is elementary enough and fine tuned well enough for the content in the supported courses that errors are extremely rare or even detectable.

I would say chatbots are bad if their job is to provide accurate information, similarly is their use in search engines. Github on the other hand would be an example of a good use, as the code will be checked by whoever is using it. I also like all the image generation/processing uses, assuming that they aren’t taken as a source of truth.

Chatbots are fine as long as it’s clearly disclosed to the user that anything they generate could be wrong. They’re super useful just as an idea generating machine for example, or even as a starting point for technical questions when you don’t know what the right vocabulary is to describe a problem.

Yeah I was thinking more along the lines of customer support chatbots

Oh yeah those are problematic, but I’m pretty sure a court has ruled in a customer’s favor when the AI fucked up, which is good at least.